Stop Letting WordPress’s Robots.txt Misguide Search Engines—What You Should Really Include

WordPress’s default robots.txt file is a generic placeholder, and a badly edited one can tank SEO efforts by blocking crucial content from search engines. The file needs proper placement in the root directory, clear sitemap URLs, and careful management of wildcards to avoid accidental content blocking. Regular monitoring ensures crawlers can access valuable pages while staying away from admin sections and thank-you pages. Smart robots.txt configuration makes the difference between stellar visibility and digital obscurity – there’s more to this story.

While many WordPress users obsess over flashy themes and trendy plugins, they often overlook a humble yet vital file that can make or break their site’s SEO success – the robots.txt file. This unassuming text file serves as a virtual bouncer, telling search engine crawlers what they can and can’t access on your site. And boy, do people mess it up.

The biggest blunder? WordPress generates a virtual robots.txt file by default, and it’s about as helpful as a screen door on a submarine. It is a one-size-fits-all file that knows nothing about your site, so it does little to steer search engines toward the content that matters. Smart move, right? Using Yoast SEO Premium can help simplify and streamline your robots.txt management.

WordPress’s default robots.txt is like putting training wheels on a rocket – it holds back your site’s true SEO potential.

Even worse, countless site owners stick their robots.txt file in the wrong directory, making it completely invisible to search engines. It’s like putting up a “No Trespassing” sign in your basement – utterly pointless. The file must be placed in the root directory for search engines to properly read and follow its instructions.
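To make the "root directory" point concrete, here is a minimal Python sketch that works out where crawlers will look for the file, given any page on the site. The function name `robots_txt_url` and the `example.com` addresses are hypothetical, chosen only for illustration.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the one location crawlers check for robots.txt: the host root."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, and discard the path, query, and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


# A copy uploaded to a subfolder is never consulted; only the root one counts.
print(robots_txt_url("https://example.com/wp-content/uploads/robots.txt"))
# https://example.com/robots.txt
```

If your file does not answer at that exact address, crawlers behave as if you had no robots.txt at all.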

Let’s get real about what belongs in this file. First, your sitemap URL needs to be there. Period. Search engines love sitemaps – they’re like treasure maps leading to your content. Understanding your backlink profile can help determine which pages deserve priority in your sitemap.
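As a rough sketch of what "your sitemap URL needs to be there" looks like in practice, the snippet below builds a small robots.txt with a `Sitemap:` line and then confirms a parser can actually read it back. The sitemap address and the `build_robots_txt` helper are assumptions for illustration; use whatever URL your own sitemap plugin reports. It relies on `RobotFileParser.site_maps()`, which needs Python 3.8 or newer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical sitemap location; substitute your site's real one.
SITEMAP_URL = "https://example.com/sitemap_index.xml"


def build_robots_txt(sitemap_url: str) -> str:
    """Assemble a minimal robots.txt that advertises one sitemap."""
    lines = [
        "User-agent: *",
        "Disallow: /wp-admin/",
        "",
        # The Sitemap line takes an absolute URL and sits outside any group.
        f"Sitemap: {sitemap_url}",
    ]
    return "\n".join(lines) + "\n"


robots_txt = build_robots_txt(SITEMAP_URL)

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
print(parser.site_maps())
# ['https://example.com/sitemap_index.xml']
```

If `site_maps()` comes back as `None`, the line is missing or malformed, and crawlers have no map to follow.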

Next, those wildcards (*) everyone loves throwing around? They’re not confetti. Use them wrong, and you’ll accidentally block half your site from being crawled. Oops.
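Here is a small sketch of how a sloppy wildcard overreaches. It translates a rule into a regular expression using the wildcard behavior the major search engines document (`*` matches any run of characters, a trailing `$` anchors the end of the URL). The helper names and sample paths are made up for illustration, and this is an approximation of crawler matching, not any crawler's actual code.

```python
import re


def rule_to_regex(rule_path: str) -> re.Pattern:
    """Translate a robots.txt path rule into an equivalent regex."""
    anchored = rule_path.endswith("$")
    if anchored:
        rule_path = rule_path[:-1]
    # Escape the literal pieces, then let each '*' match anything.
    body = ".*".join(re.escape(piece) for piece in rule_path.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_matched(rule_path: str, url_path: str) -> bool:
    # Rules are matched from the start of the URL path.
    return rule_to_regex(rule_path).match(url_path) is not None


# Intended target: paginated archives.
print(is_matched("/*page", "/blog/page/2/"))        # True
# Collateral damage: a landing page you wanted crawled.
print(is_matched("/*page", "/landing-page/"))       # True
# A tighter rule catches the first and spares the second.
print(is_matched("/*/page/", "/blog/page/2/"))      # True
print(is_matched("/*/page/", "/landing-page/"))     # False
```

Running every proposed wildcard rule against a list of real URLs from your site before publishing it is a cheap way to catch this kind of overreach.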

The true power of robots.txt lies in its ability to reduce server load and prevent index bloat. Think of it as traffic control for your website. You want search engines crawling your money-making content, not your thank-you pages or admin sections.

And for heaven’s sake, stop blocking your important directories. Nothing kills SEO faster than telling Google to ignore your best content.
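One way to check that you are blocking the right stuff and nothing else is to test a draft file against URLs you care about. The sketch below uses Python's standard `urllib.robotparser`; the rules, the URL list, and the `blocked_urls` helper are all hypothetical examples, not a recommended configuration for every site. One caveat: Python's parser applies the first matching rule in file order, while Google picks the most specific match, so the `Allow` line is listed first here to get the same answer from both.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft: keep crawlers out of admin and thank-you pages only.
ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
Disallow: /thank-you/
"""

# Hypothetical URLs to test, mixing content you want crawled with pages you don't.
URLS_TO_CHECK = [
    "https://example.com/blog/my-best-post/",
    "https://example.com/products/widget/",
    "https://example.com/thank-you/",
    "https://example.com/wp-admin/options.php",
    "https://example.com/wp-admin/admin-ajax.php",
]


def blocked_urls(robots_txt: str, urls: list, agent: str = "*") -> list:
    """Return the subset of urls that the given robots.txt disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


for url in blocked_urls(ROBOTS_TXT, URLS_TO_CHECK):
    print("blocked:", url)
# blocked: https://example.com/thank-you/
# blocked: https://example.com/wp-admin/options.php
```

If a money-making URL ever shows up in that output, the file needs fixing before it goes live.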

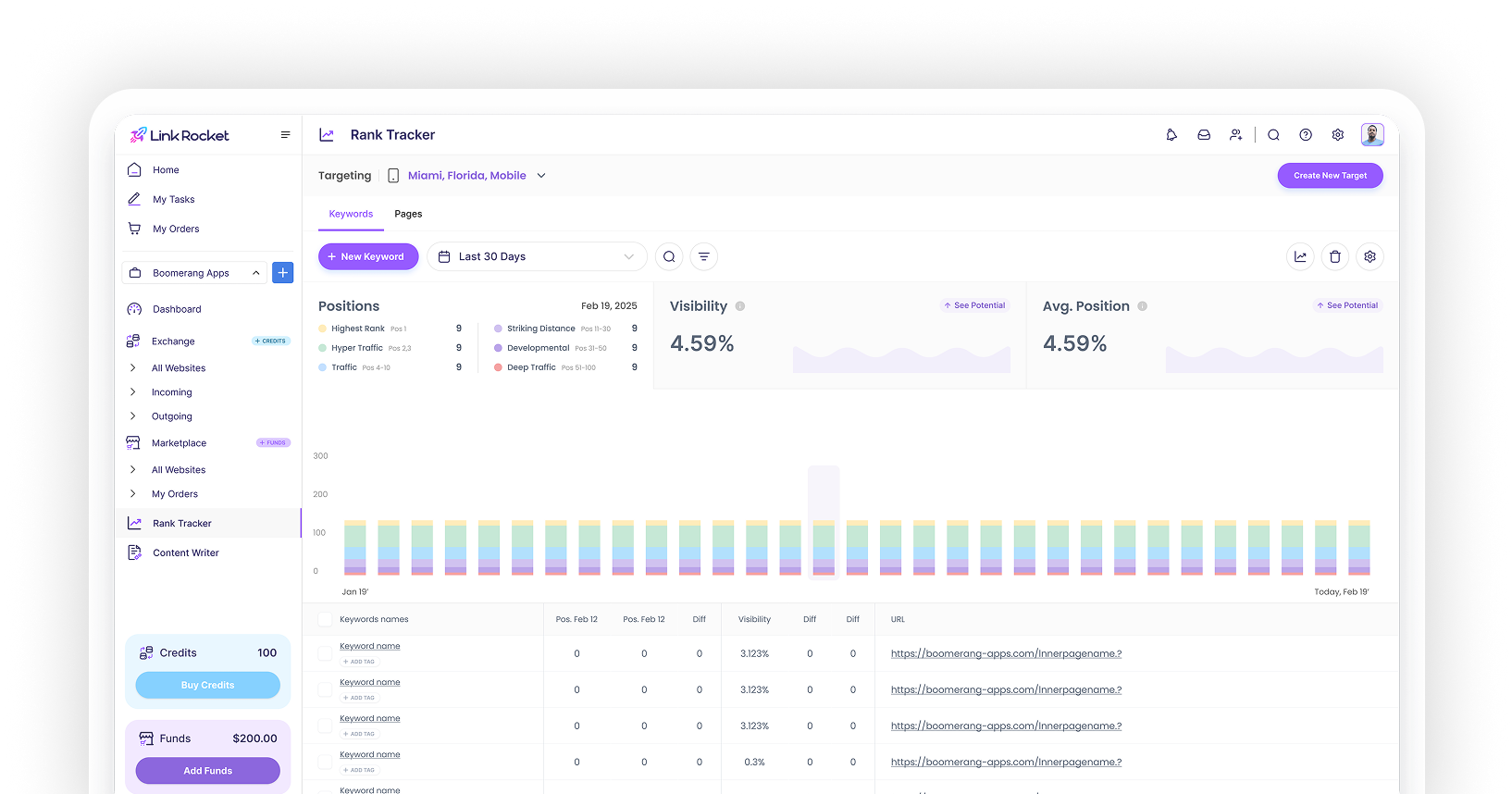

Tools like AIOSEO can help manage this vital file, but don’t just set it and forget it. Regular reviews are key because websites change, and yesterday’s perfect robots.txt might be today’s SEO nightmare.
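A regular review can be as simple as comparing today's file with the last version you signed off on. This is a minimal sketch using Python's standard `difflib`; the `robots_changes` name and both sample files are hypothetical, and how you fetch and store the two versions is left up to you.

```python
import difflib


def robots_changes(previous: str, current: str) -> list:
    """Return only the added (+) and removed (-) lines between two versions."""
    diff = difflib.unified_diff(
        previous.splitlines(),
        current.splitlines(),
        fromfile="previous",
        tofile="current",
        lineterm="",
    )
    return [
        line
        for line in diff
        # Keep real changes, skip the '---' / '+++' file header lines.
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    ]


last_reviewed = "User-agent: *\nDisallow: /wp-admin/\n"
live_today = "User-agent: *\nDisallow: /wp-admin/\nDisallow: /blog/\n"

print(robots_changes(last_reviewed, live_today))
# ['+Disallow: /blog/']
```

An empty list means nothing changed since the last review; anything else deserves a look, especially a new `Disallow` line nobody remembers adding.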

The key is striking that perfect balance – blocking the right stuff while ensuring your valuable content gets the attention it deserves. Remember, on the web, even the smallest file can have the biggest impact.