Why Everything You Know About SEO Testing Is Useless Without These GEO Experiments

Traditional SEO testing carries little weight in the AI landscape. A/B tests and backlink counts still move search rankings, but they say almost nothing about whether an AI engine cites you. GEO experiments spanning more than 10,000 queries suggest that AI engines work differently: they triangulate information from multiple sources and prioritize factual consistency, not click-through rates. Companies are celebrating website traffic while competitors dominate AI responses. The old playbook of keyword stuffing and two-week testing windows counts for little when large language models decide what information users actually see.

While SEO professionals have spent decades mastering the art of pleasing Google’s algorithms, a new player has entered the game—and it doesn’t care about your backlinks. Generative Engine Optimization, or GEO, operates on completely different rules. Those inverted indexes and link graphs that SEO folks worship? Meaningless. GEO runs on vector space models and attention mechanisms that prioritize semantic understanding over traditional metrics.

The testing methodologies that worked for SEO don’t carry over to GEO. Sure, manipulating title tags and meta descriptions still matters for search rankings. A/B testing, controlled variables, random page selection: all that traditional stuff helps websites climb Google’s ladder. But none of it translates to getting cited in AI-generated answers. That’s the brutal truth nobody wants to hear.
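For readers who haven’t run one, the "random page selection" step of a traditional SEO split test can be sketched roughly as follows. This is a minimal illustration, not a description of any particular tool: the function name `split_pages`, the fixed seed, and the example URLs are all assumptions made for the sketch.

```python
import random


def split_pages(urls, seed=42):
    """Randomly assign pages to control and variant groups of near-equal size.

    Hypothetical helper for illustration; the seed only makes the split repeatable.
    """
    rng = random.Random(seed)
    shuffled = list(urls)          # copy so the caller's list is untouched
    rng.shuffle(shuffled)
    mid = len(shuffled) // 2
    return {"control": shuffled[:mid], "variant": shuffled[mid:]}


# Example: ten made-up product pages split into two groups of five.
pages = [f"/products/item-{i}" for i in range(10)]
groups = split_pages(pages)
```

The variant group gets the title-tag or meta-description change, the control group stays as-is, and the two are compared over the test window. Nothing in this setup observes what an AI engine cites, which is the gap the article is pointing at.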

GEO experiments reveal something that surprises SEO veterans: keyword stuffing actually hurts performance. Remember when everyone crammed keywords everywhere? Yeah, that’s poison for GEO. Instead, AI systems want detailed statistics, authoritative quotes, and proper citations. Content lacking clear structure or semantic HTML5 tags is far less likely to be picked up by these inference engines, regardless of how well it ranks in traditional search.
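One quick way to audit a page for the structural side of this is to count which semantic HTML5 elements it uses. The sketch below relies only on Python’s standard `html.parser`; the tag list in `SEMANTIC_TAGS` and the helper name `semantic_tag_counts` are assumptions chosen for illustration, and no AI engine is known to score pages this way.

```python
from html.parser import HTMLParser

# Assumed working definition of "semantic" tags for this audit; adjust as needed.
SEMANTIC_TAGS = {
    "article", "section", "header", "footer", "nav",
    "main", "aside", "figure", "figcaption", "time",
}


class SemanticTagCounter(HTMLParser):
    """Counts opening tags that appear in SEMANTIC_TAGS."""

    def __init__(self):
        super().__init__()
        self.counts = {}

    def handle_starttag(self, tag, attrs):
        # HTMLParser passes tag names in lowercase.
        if tag in SEMANTIC_TAGS:
            self.counts[tag] = self.counts.get(tag, 0) + 1


def semantic_tag_counts(html):
    """Return a dict of semantic tag name -> number of occurrences."""
    parser = SemanticTagCounter()
    parser.feed(html)
    parser.close()
    return parser.counts


sample = (
    "<main><article><header><h1>Title</h1></header>"
    "<section><p>Body</p></section></article></main>"
)
counts = semantic_tag_counts(sample)
```

A page built entirely from `<div>` and `<span>` returns an empty dict, which makes it an easy candidate to flag for restructuring.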

The GEO-Bench methodology, with its 10,000+ queries across domains, backs this up. Large language models prioritize information gain and factual consistency. They triangulate multiple sources. They don’t care about click-through rates.

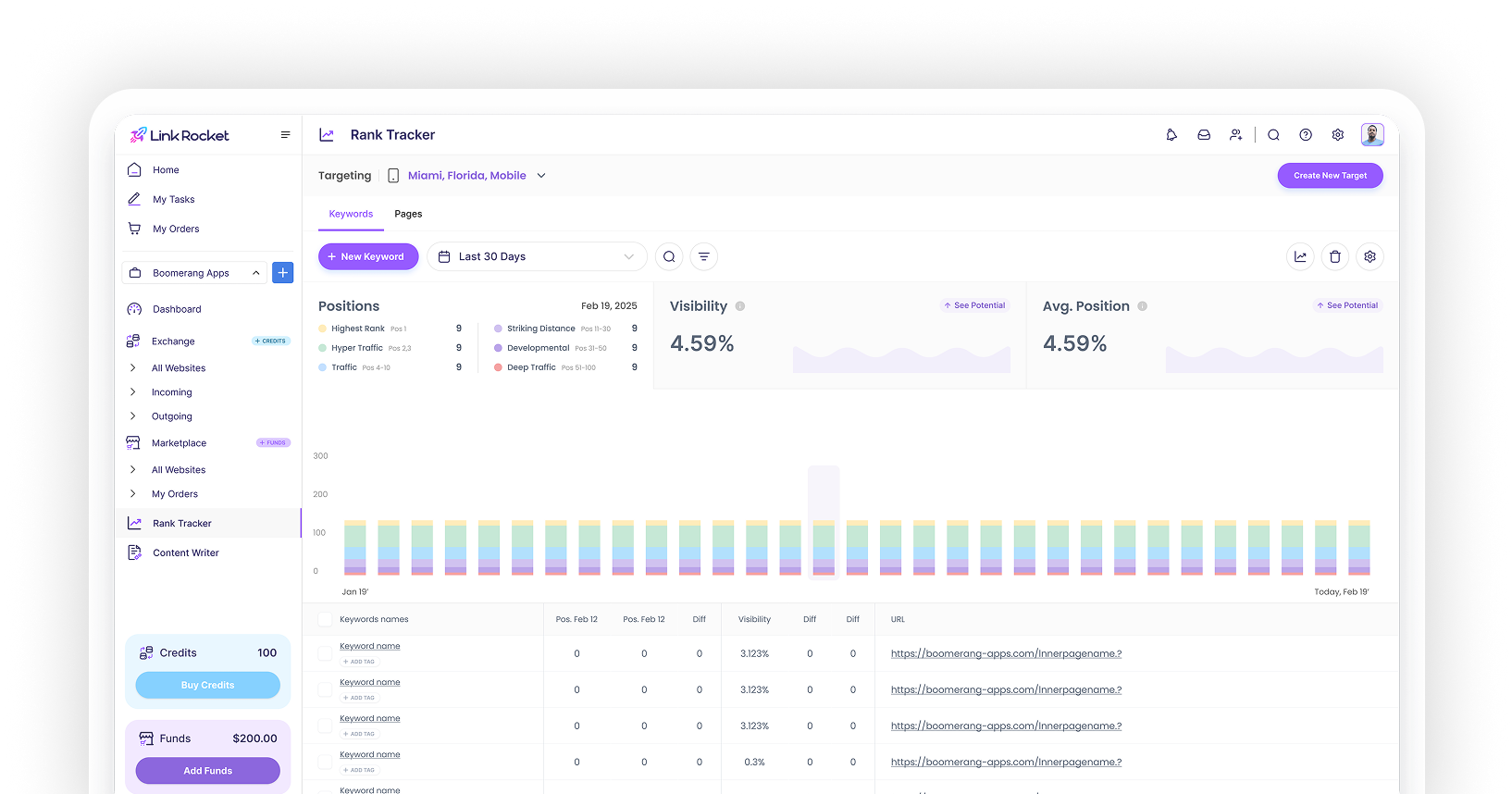

The measurement game has changed too. Organic traffic and keyword ranks meant everything in SEO land, and a traditional SEO test needs at least 2-4 weeks to account for variability and algorithm fluctuations before it yields meaningful results. Now it’s about brand share of voice and citation frequency inside AI responses. Companies tracking the wrong metrics are effectively flying blind. They’re celebrating website visits while their competitors dominate AI conversations.
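The two GEO metrics named above can be made concrete with a small sketch. It assumes you have already collected a sample of AI-generated answers as plain strings. The definitions used here are one reasonable reading, not a standard: citation frequency is the fraction of responses that mention a brand, and share of voice is a brand’s mentions divided by all tracked mentions. The function name `citation_metrics` and the brands "Acme" and "Globex" are made up.

```python
from collections import Counter


def citation_metrics(responses, brands):
    """Compute per-brand citation frequency and share of voice.

    responses: list of AI answer strings (assumed to be collected elsewhere).
    brands: list of brand names to track.
    Matching is a naive case-insensitive substring count, so a brand name
    that appears inside a longer word would be over-counted.
    """
    mentions = Counter()   # total mentions per brand
    cited_in = Counter()   # number of responses mentioning the brand at least once
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            n = lowered.count(brand.lower())
            mentions[brand] += n
            if n:
                cited_in[brand] += 1
    total = sum(mentions.values())
    return {
        brand: {
            "citation_frequency": cited_in[brand] / len(responses) if responses else 0.0,
            "share_of_voice": mentions[brand] / total if total else 0.0,
        }
        for brand in brands
    }


# Four made-up AI answers to the same prompt.
responses = [
    "Acme and Globex both offer this; Acme is cheaper.",
    "Globex is the usual recommendation.",
    "No specific vendor stands out.",
    "Acme publishes detailed benchmarks.",
]
metrics = citation_metrics(responses, ["Acme", "Globex"])
```

In this toy sample both brands appear in half of the responses, but Acme holds the larger share of voice because it is mentioned more often overall. Tracking both numbers over repeated samples is closer to what GEO measurement asks for than a keyword rank report.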

Here’s where it gets interesting, though. Quality content wins in both worlds. E-E-A-T principles—Experience, Expertise, Authoritativeness, Trustworthiness—matter everywhere. Good content optimized for user intent performs well in traditional search and increases AI citation likelihood. Organizations adopting dual strategies are seeing results across both fronts.

The fundamental difference is stark. SEO drives traffic to websites. GEO delivers information directly within AI platforms, often without generating clicks at all. Testing strategies need complete overhauls to account for this shift.

Companies clinging to old methodologies while ignoring GEO experiments are setting themselves up for irrelevance. The game has changed, and the rulebook needs rewriting.